281: Our Thought Dreams Can Now Be Seen

Confer.to and Authority as a Design Problem

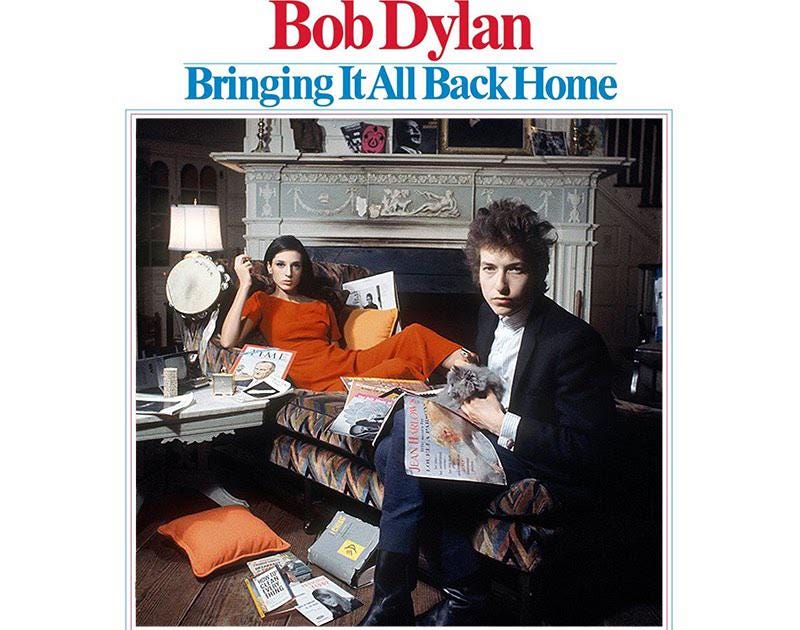

“If my thought-dreams could be seen

They’d probably put my head in a guillotine.”

When Bob Dylan wrote that line, the premise was hypothetical. Exposure was exceptional. Interiority was presumed intact.

That presumption has collapsed.

Today, thought-dreams are typed into machines. Drafts. Doubts. Strategic calculations. Unfinished arguments. Private fears. We are building systems that do not merely respond to thought but capture it as input. The guillotine, if it exists, is no longer theatrical. It is infrastructural.

This is the political landscape in which confer.to should be understood.

Confer is the latest project of Moxie Marlinspike, best known for founding Signal. With Signal, encryption was normalized for everyday communication. The mission was clear: private speech should not depend on trusting a provider. It should be guaranteed by design.

Confer extends that wager from communication to cognition.

At the surface, confer.to is an AI chat interface. You type. It replies. It drafts, explains, brainstorms. It looks familiar.

What distinguishes it is architectural. Prompts are encrypted client-side. Computation occurs in secure enclaves. Conversations are not stored in retrievable form. They are not folded back into training data. The system is structured so that the operator cannot inspect or accumulate your cognitive traces.

This is not a branding choice. It is a jurisdictional claim.

AI systems are no longer merely tools. They are becoming cognitive environments: places where people think in ways not previously possible. We rehearse conversations. Test arguments. Explore dissenting impulses. Refine positions we may never publicly defend. These interactions are provisional. They are internal.

When those exchanges are logged and retained, authority shifts upstream. Historically, power intervened at the level of speech and behavior. Now it can operate at the level of deliberation itself.

Confer’s design is a refusal of that shift.

It treats prompts not as user data but as interior space. Where some see data as something to be optimized, we should agree that interior space is something to be protected.

Most AI systems improve by accumulation. They learn from use. They refine themselves by absorbing the traces of interaction. Memory becomes a feature. Personalization becomes leverage. The system models you while remaining opaque to you. Over time, asymmetry hardens into authority.

Confer declines that compounding advantage.

By refusing to train on user prompts, it gives up one of the central engines of AI improvement. It accepts bounded growth in exchange for bounded power. It refuses to turn cognition into capital.

That trade-off challenges the dominant narrative of legitimacy in technology: optimization justifies access. We are told that more visibility produces better systems, and better systems warrant deeper intrusion. Confer interrupts that logic.

The deeper move is this: it replaces trust with impossibility.

Contemporary authority asks to be trusted. It offers transparency reports, ethics boards, compliance frameworks. It promises restraint. Confer assumes restraint is inadequate. The only reliable limit on power is structural incapacity. If a system cannot access something, it cannot exploit it.

Authority becomes a design problem.

Confer's design asserts that legitimacy does not arise from good intentions but from enforced limits. A system that can see everything does not need to justify itself. A system that cannot see certain things restores the need for explanation.

The political significance extends beyond a single platform.

As AI embeds itself into workplaces, classrooms, and governance, the line between assistance and surveillance erodes. Employers already monitor productivity. Platforms already track behavior. If AI tools also monitor how people reason, especially their hesitations, their dissents, or their speculative thinking, then the last buffer between authority and interior life narrows dramatically.

Confer does not dismantle that broader infrastructure. It does something quieter. It marks a boundary. It encodes a claim: private thought is not raw material, even in the name of progress.

Our thought-dreams can now be seen.

The danger is not spectacle, but normalization. If visibility of deliberation becomes standard practice, authority no longer needs to wait for speech or action. It can anticipate. It can preempt. It can shape decisions before they harden into positions.

Dylan imagined exposure as a catastrophe. Today it is a default setting.

Confer.to's significance lies in the boundary it draws. It insists that some parts of human life must remain structurally beyond reach, not because authority is always malicious, but because authority without limits ceases to require legitimacy.

Authority is not only what governs us. It is what we allow to look inside.

P.S. We’ve got a Metaviews Signal group that provides a space to discuss our work, your work, and the world we find ourselves in. The vast majority of members lurk, but a few of us employ the platform to entertain, engage, maybe enlighten, or maybe enrage 🤷🏼‍♂️. Email metaviews@gmail.com to apply or communicate with us outside of the liking, commenting, and sharing we regularly ask for in exchange for the labour we provide… 👇🏼
